RememberWhen

An AI-powered memory curation system that transforms scattered photo libraries into intentional legacies. Through location proximity, music associations, and temporal patterns, RememberWhen surfaces memories contextually—then enables collaborative archiving where friends and family enrich shared moments with annotations, playlists, and stories.

Context

ArtCenter MDes | Grad Capstone | Thesis Advising: Elise Co

Role

Researcher, Tester, Prototyper

Tools

Claude Artifacts, Analog Notetaking & Prototyping, Figma

Timeframe

Full Semester (15 Weeks)

Deliverables

Thesis Paper, Prototypes, Pitch Deck, Video Capstone Presentation

The Challenge

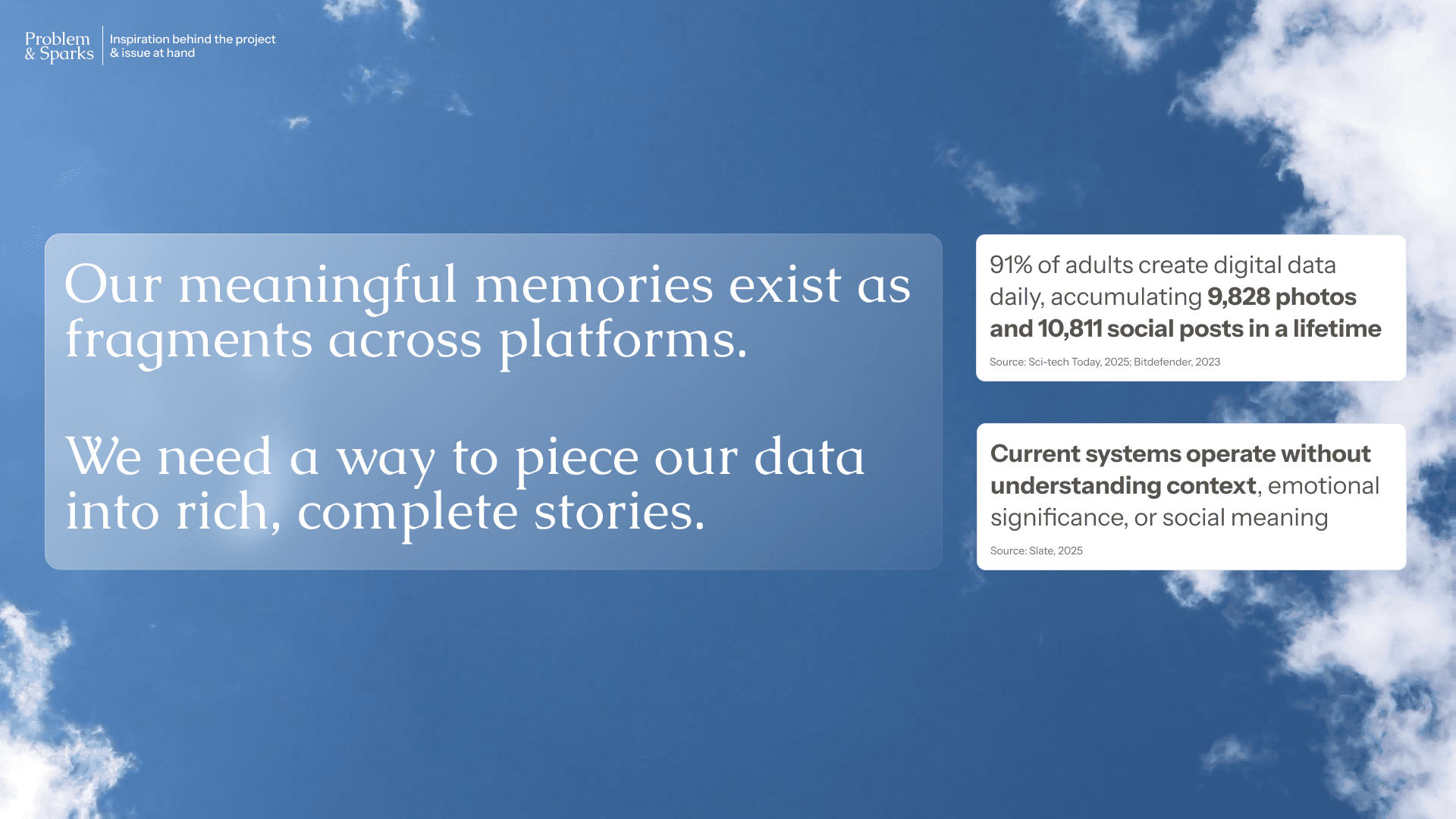

Your phone remembers everything. It understands almost nothing.

The average person accumulates roughly 9,800 photos, 10,800 social media posts, and 126 email addresses over a lifetime. We generate about 1.7 MB of data every second. And yet, 97% of older adults leave behind completely unorganized digital legacies.

Our devices surface "memories" based on dates alone. Apple's algorithm once titled a user's memory reel "Best of the last 2 months" and opened on a photo of a discarded Craisins box on a patch of grass. The system had decided that was a significant moment worth remembering. These algorithmic flashbacks strip images of vital context: the people, the feeling, the reason you took the photo in the first place.

RememberWhen started with a question: what if we could help people intentionally curate their digital lives before that data becomes an inheritance problem?

Process: Immersing in a New Domain

Research that went deep, wide, and sometimes into science fiction

This subject matter was entirely new to me. I don't have a background in thanatechnology or grief studies. That was part of the appeal. When I encounter unfamiliar territory, my approach is to find the major players, discover examples in media (fictional too!), and understand who has a stake in the outcome.

Secondary research pulled from three directions. Academic literature on thanatechnology and digital legacy established the theoretical foundation. Market reports from Gartner and Grand View Research validated the scale of the problem: North America represented over 37% of the digital legacy market in 2024, with significant growth projected. And competitive analysis revealed existing solutions dating back as early as 2011's Pummelvision, along with the emerging app Retro, both attempting to add context to captured memories.

The research also surfaced genuinely unsettling territory. I studied how AI-generated avatars of deceased loved ones were being deployed in China, and how NPR reported on families using AI to let murder victims "speak" in court. I looked at how Black Mirror's "Be Right Back" explored the grief implications of digital replication, and how the Afterlife bar in the Cyberpunk universe imagined a world where memory data becomes currency. These references revealed how emerging technologies can manipulate the way we grieve, potentially deferring closure and disrupting the psychological arc of mourning.

Primary research included interviews with people who shared their personal efforts and experiences in the act of remembering. I spoke with individuals across different levels of "data archiving" motivation, from people who barely think about their digital footprint to those who actively curate it. These conversations shaped my understanding of what types of memories people actually value preserving, and how deeply personal the act of curation is.

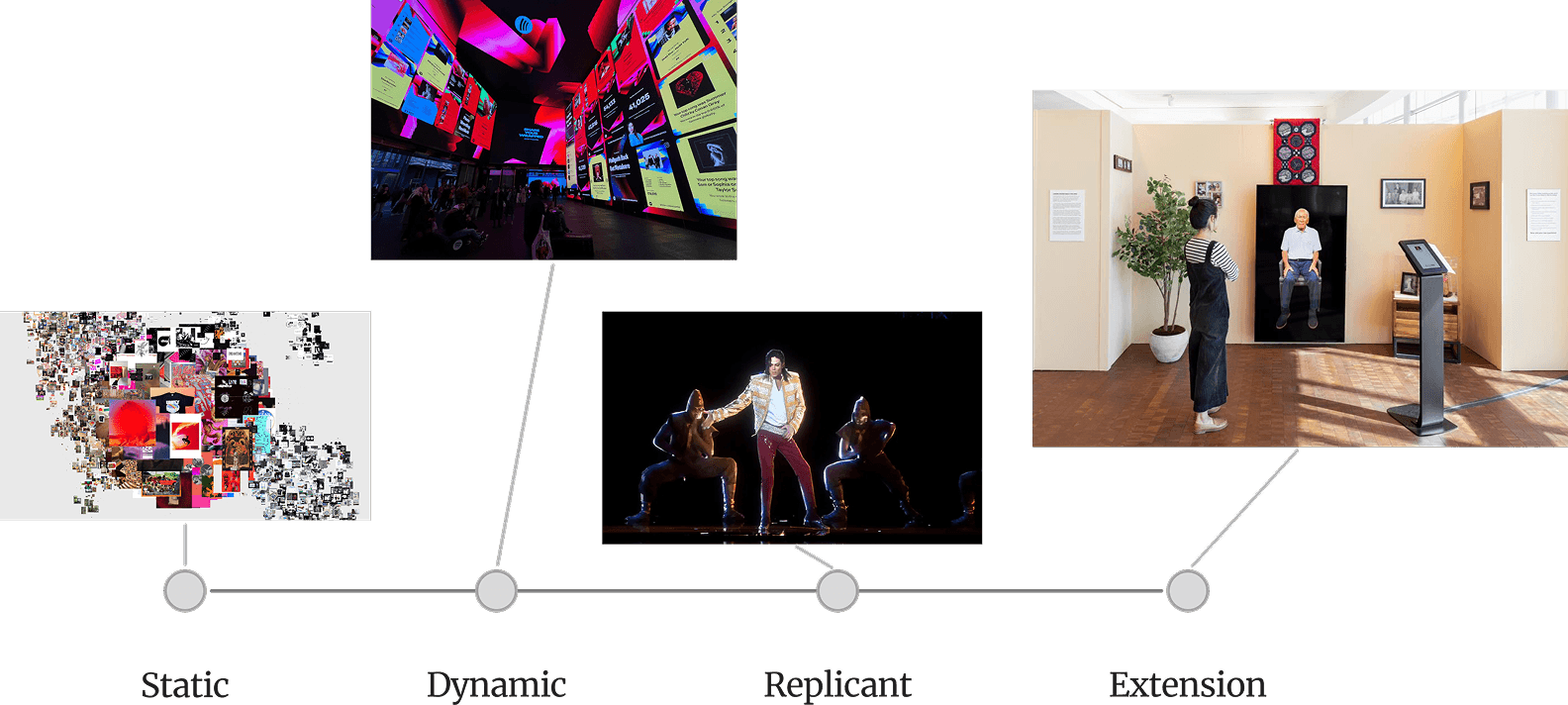

From this research, I proposed the Digital Presence Spectrum, a framework for mapping how applications relate to thanatechnology along four stages: Static, Dynamic, Replicant, and Extension. This became the project's original thesis contribution, later published on ArtCenter's MDes IxD Medium publication.

The Messy Middle: Exploration Prototypes

Prototypes that explored, failed, and taught

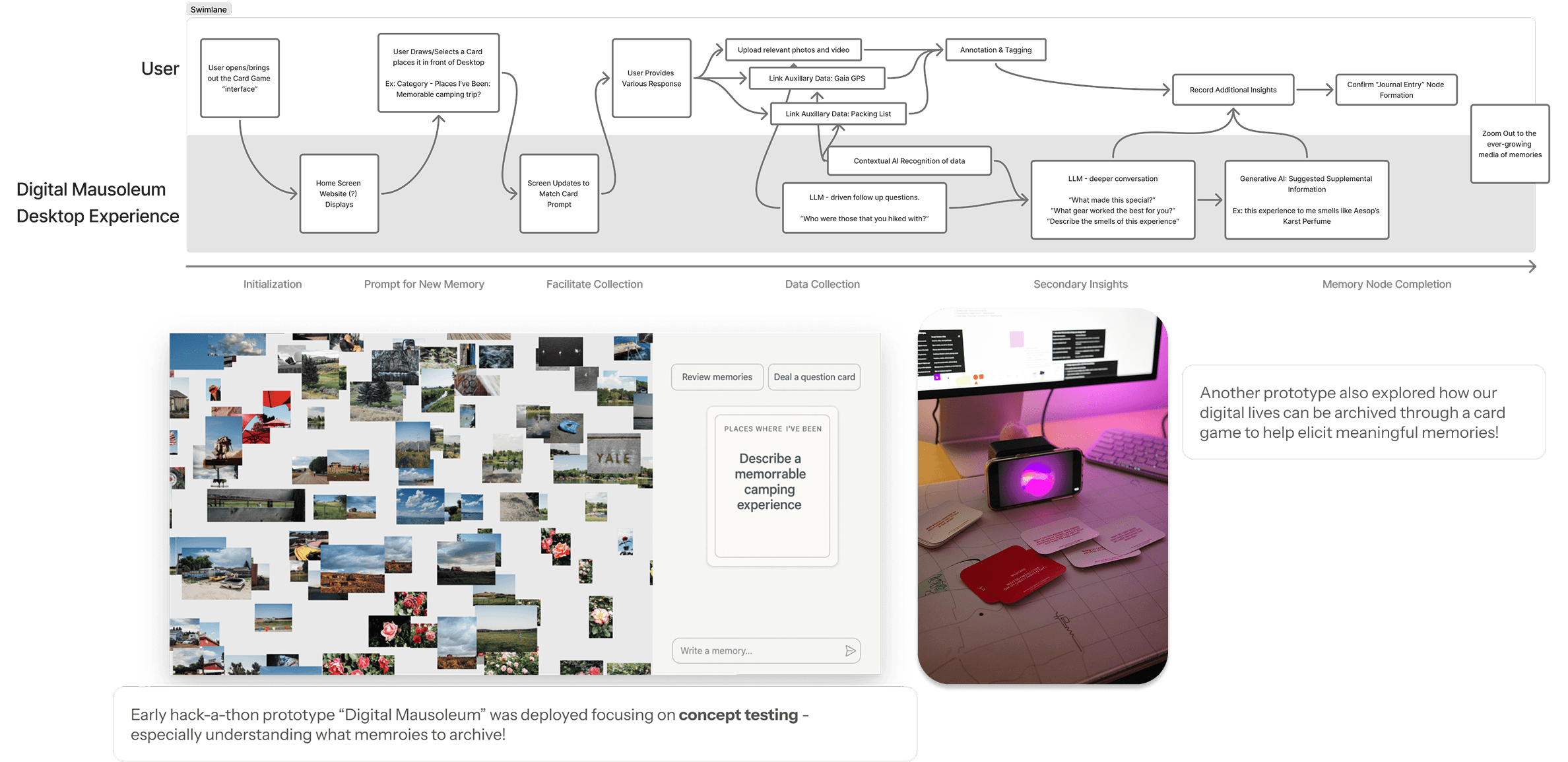

Before RememberWhen even existed in the video seen above, I explored the problem space through a series of concept excursions. The capstone's double-diamond process encouraged this: stay in the messy middle, diverge before converging.

The very first prototype, "Digital Mausoleum," was a flow diagram exploring how a user might "register" a memory based on onscreen card prompts, with follow-up cards that help dig further into a memory. I even sketched a physical card game version to test what questions people naturally ask when archiving memories ("Favorite go-to recipes?" "A trip that changed your perspective?"). A hackathon week, as early as week 3, produced "Sanctum," which gave the Digital Mausoleum concept interactive life and yielded early feedback on what types of memories people want to preserve.

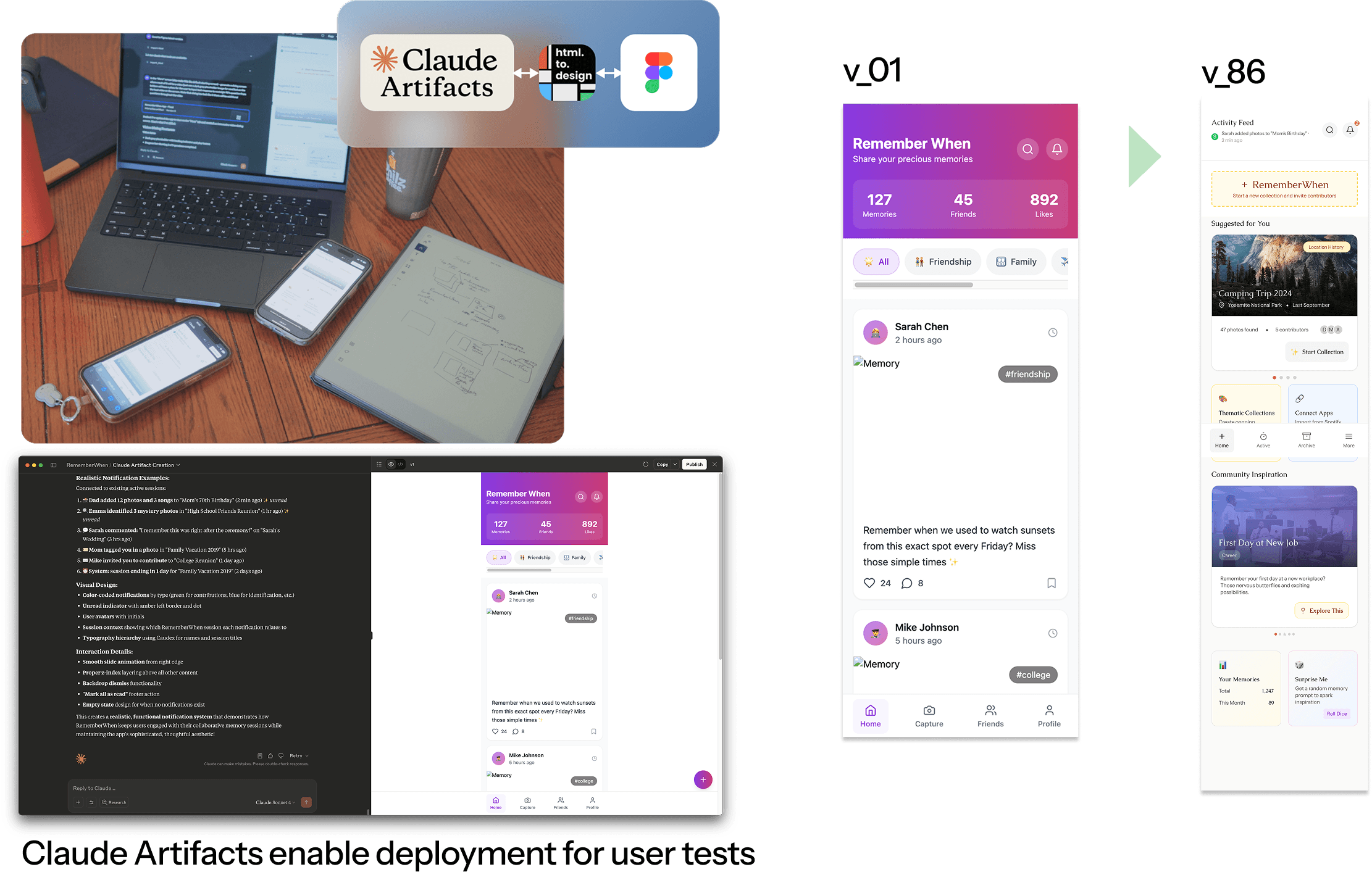

Vibe-coding with Claude Artifacts: v1 to v86

RememberWhen iterations steered towards a mobile application. I took a daring risk and adopted an AI-first prototyping workflow using Claude Artifacts, which had been released to the public midway through the Summer 2025 semester. Claude Artifacts let me prompt my idea of context-based memory archiving and get a working prototype back. Using the latest-at-the-time Claude Artifacts MCP and html-to-design (as of February 2026, replaced by Figma Make and Claude Opus), I ported those same designs to Figma for refinement, reel production, and presentation.

The speed was transformative. I went from v1 to v86 over the course of the project. And because the prototypes were functional and deployable via URL, I could put RememberWhen directly on users' devices during testing sessions. This enabled something I love doing: sitting alongside users and deeply listening to their feedback while adjusting the prototype in real time. Vibe-coding closed the feedback loop faster than any workflow I've used before.

What Users Told Us

Unmoderated testing yielded 3 key findings

I tested with 5 people, each with a different level of data archiving motivation, in 40-minute in-person tabletop sessions. I used unguided scenarios where users could freely explore the Claude Artifact prototype.

Finding 1: Terminology Restructure. Users were confused by overlapping concepts like "Create Session," "Start Session," "Archives," and "Memory Nodes." Testing led to a clean hierarchy: Collections are either Active or Archived. Any single unit of media (one photo, one comment) is a "Memory." The app name itself became the core action verb: "Let's RememberWhen we went camping."
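The hierarchy that came out of testing can be sketched as a small data model. This is a minimal illustration and not the prototype's actual code: the class names, the `kind` labels, and the rule that an archived Collection stops accepting new Memories are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from enum import Enum


class CollectionStatus(Enum):
    """A Collection is either Active or Archived."""
    ACTIVE = "active"
    ARCHIVED = "archived"


@dataclass
class Memory:
    """A single unit of media, for example one photo or one comment."""
    kind: str     # hypothetical label such as "photo" or "comment"
    content: str  # a file reference or the text itself


@dataclass
class Collection:
    title: str
    status: CollectionStatus = CollectionStatus.ACTIVE
    memories: list[Memory] = field(default_factory=list)

    def add(self, memory: Memory) -> None:
        # Assumption for this sketch: archived collections are read-only.
        if self.status is CollectionStatus.ARCHIVED:
            raise ValueError("archived collections do not accept new memories")
        self.memories.append(memory)

    def archive(self) -> None:
        self.status = CollectionStatus.ARCHIVED


trip = Collection("Camping trip")
trip.add(Memory("photo", "tent.jpg"))
trip.add(Memory("comment", "Remember when the tent collapsed?"))
trip.archive()
```

Keeping a photo and a comment under the same `Memory` type mirrors the finding that users wanted one word for any single unit of media.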

Finding 2: Navigation Simplification. The original session/archive distinction caused confusion. Users guided the redesign toward a simplified 4-tab structure: Home (with AI suggestions), Active (collaborative collections in progress), Archive (completed collections), and More (settings).

Finding 3: Memory Sources Go Way Beyond Photos. Users told me their meaningful data wasn't limited to photos and videos. They wanted to archive Spotify playlists, voice recordings, workout history, Instagram saved reels, "meme folders," text messages, YouTube bookmarks, and boutique hotel check-ins. One user mentioned video game achievements. This finding fundamentally expanded the multi-modal scope of RememberWhen.

Service Design: Intelligence Beyond "On This Day"

Agentic AI with humans still in the loop

The service design blueprint maps what happens beneath RememberWhen's deceptively simple frontend. While the user sees suggestion cards for experiences like camping trips or family barbecues, the backend orchestrates multiple agentic AI systems: location agents that notice you're near a trailhead you visited last summer, context agents that correlate photo metadata with calendar events, and music-matching agents that recognize songs associated with specific memories.
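As a rough illustration of the location agent idea, the sketch below checks whether the user's current position falls within a radius of places tied to past memories. It is not the project's backend: `PlaceMemory`, `nearby_memories`, the coordinates, and the 1 km default radius are hypothetical, and the distance uses the standard haversine formula.

```python
from dataclasses import dataclass
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


@dataclass
class PlaceMemory:
    """Hypothetical record linking a memory title to where it was captured."""
    title: str
    lat: float
    lon: float


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in kilometers between two lat/lon points."""
    dlat = radians(lat2 - lat1)
    dlon = radians(lon2 - lon1)
    a = sin(dlat / 2) ** 2 + cos(radians(lat1)) * cos(radians(lat2)) * sin(dlon / 2) ** 2
    return 2 * EARTH_RADIUS_KM * asin(sqrt(a))


def nearby_memories(lat: float, lon: float, memories: list[PlaceMemory],
                    radius_km: float = 1.0) -> list[PlaceMemory]:
    """Return the memories captured within radius_km of the given position."""
    return [m for m in memories
            if haversine_km(lat, lon, m.lat, m.lon) <= radius_km]


# Illustrative coordinates only.
library = [
    PlaceMemory("Trailhead hike last summer", 34.000, -118.000),
    PlaceMemory("Family barbecue", 35.000, -118.000),
]
suggestions = nearby_memories(34.001, -118.000, library)
```

In a fuller system, a result like `suggestions` would only be a candidate for a suggestion card, leaving the decision to open or archive it with the user.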

Applying pluriversal design principles, the system balances algorithmic intelligence with human ways of knowing. Features like guest-hosted "90s kid" community experiences and collaborative navigation of difficult memories demonstrate how AI can be woven together with cultural knowledge and community wisdom. A human curation team can release limited-time community events, keeping the platform grounded in shared human experience rather than purely algorithmic decisions.

Reflection

Range, emotion, and responsibility

I love this project because it has nothing to do with aerospace and yet it's completely me. RememberWhen was a personal reminder that I can courageously lean on my curiosity to rapidly immerse in unfamiliar domains. The research methods, the systems thinking, the user advocacy: those transferred directly. The subject matter was brand new.

Emotion in data matters. Coming from a data science background where objectivity is the standard, this project revealed how our data still holds emotions and how design must have space to honor that. It's a memory storage capacity problem, sure. But it's also about how data can make us feel based on how it's presented. RememberWhen's visual design went through multiple iterations to evoke calm, serenity, and acceptance, visible even in the gentle sky backgrounds of the presentation deck.

Research is humanizing. I had the honor of interviewing people who shared their efforts and experiences in the act of remembering. The emerging death-tech and memory archiving landscape is still unstructured and deeply sensitive. As AI accelerates in parallel, memory preservation and the way we present it require profound care and responsibility.